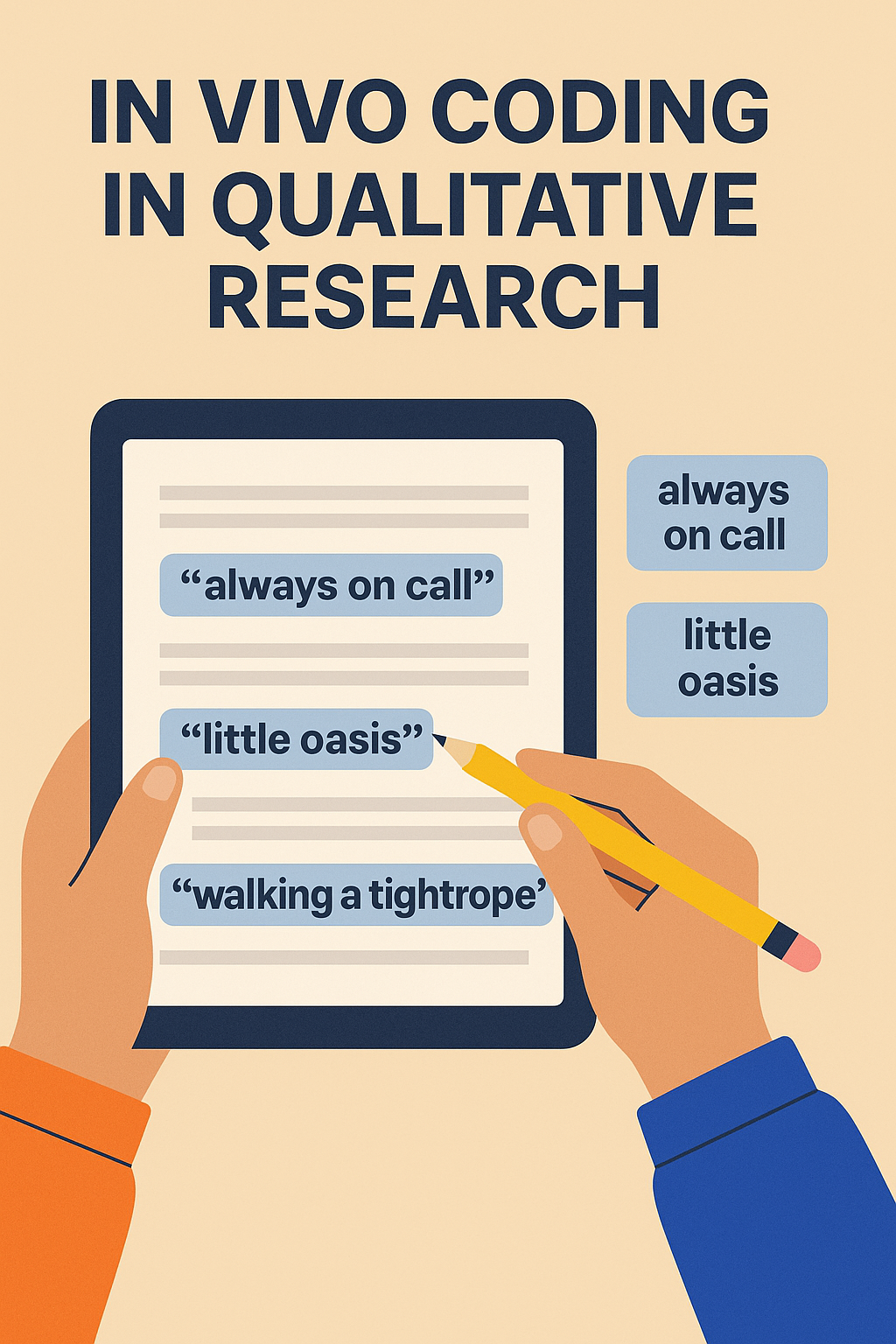

Imagine scrolling through a transcript and pausing at a phrase that stops you in your tracks: “I’m always on call,” “like a little oasis,” or “walking a tightrope.” These aren’t just quotes; they’re phrases dense with insight, ready to guide your analysis. In vivo coding captures these moments by making participants’ own terminology the lens through which you view your data.

At its core, in vivo coding means using participants’ exact words or short phrases as codes, with no translation and no abstraction. Like a linguistic mirror, these codes preserve meaning, cultural nuance, and emotional weight that researcher-driven labels might dilute. It’s an inductive approach rooted in grounded theory that helps you stay true to lived experiences.

Tools like UserCall support in vivo coding by letting you highlight quotes directly and pull quotes automatically from transcripts during AI-assisted analysis. These quotes can be tagged, grouped, and thematically connected—while preserving the exact language that gave rise to the insight. The tool also helps uncover recurring phrases across sessions so you can stay grounded in what users actually say, even when working with dozens or hundreds of responses.

Here are three real-world examples where in vivo coding brings vivid participant insights to life:

“I feel like I’m always on call.”

Coded literally, this phrase reveals the blurred boundaries of remote work culture.

“Little oasis”

Through this small phrase, gardeners express their sanctuary-seeking behavior in concrete terms.

“Dropping the ball” / “Like a family”

These phrases signal emotional frameworks (responsibility, belonging) that emerge organically from participants, not predefined scales.

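Use it when you're:

- Conducting open coding in a grounded theory study
- Working with interviews or focus groups where phrasing matters
- Preserving cultural specificity or emotional tone in narratives
- Capturing quotable phrases that bring analysis to life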

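Skip or limit in vivo coding when:

- You're synthesizing across large datasets, where too many one-off phrases create fragmentation
- You're using structured research instruments, where topic-focused codes serve better
- Your analysis is ready for theory-building and abstraction, where descriptive, value, or axial coding fits better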

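AI-assisted qualitative analysis tools like Usercall allow you to:

- Upload voice or text interviews
- Auto-transcribe and segment by speaker
- Highlight and tag in vivo codes directly from transcripts
- Track how often specific phrases appear across participants
- Group codes visually to identify emerging clusters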

It’s especially helpful when you're handling multiple interviews and want to surface repeated language fast, without skipping the richness of human speech.
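To make "surfacing repeated language" concrete, here is a minimal Python sketch of one way to find word sequences that more than one participant uses. It is an illustration under stated assumptions, not how Usercall or any other tool works internally: the function name `recurring_phrases`, the participant IDs, and the sample sentences are all made up for the example.

```python
import re
from collections import defaultdict

def recurring_phrases(transcripts, n=3, min_participants=2):
    """Return n-word phrases said by at least `min_participants` participants."""
    speakers_by_phrase = defaultdict(set)
    for participant, text in transcripts.items():
        # Normalize curly apostrophes, lowercase, and split into words.
        words = re.findall(r"[a-z']+", text.lower().replace("\u2019", "'"))
        for i in range(len(words) - n + 1):
            phrase = " ".join(words[i:i + n])
            speakers_by_phrase[phrase].add(participant)
    return {
        phrase: sorted(speakers)
        for phrase, speakers in speakers_by_phrase.items()
        if len(speakers) >= min_participants
    }

# Hypothetical sample data; IDs and sentences are invented for illustration.
transcripts = {
    "P1": "I feel like I'm always on call, even on weekends.",
    "P2": "Since we went remote, I'm always on call too.",
    "P3": "My garden is like a little oasis in the noise.",
}

shared = recurring_phrases(transcripts)
for phrase, speakers in shared.items():
    print(f"{phrase!r} used by {speakers}")
```

A count like this only points you to candidate phrases. Deciding whether "always on call" deserves to become a code is still an interpretive call made with the full quote in view.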

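A hybrid coding approach balances the power of in vivo with analytical flexibility:

- Round 1: in vivo coding, to preserve authenticity and catch nuance
- Round 2: descriptive or value codes, to group ideas meaningfully
- Round 3: axial or thematic coding, to build connections across the data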

⚡ UserCall’s AI can suggest code groupings or synthesize themes, but starting with in vivo codes ensures your foundation is built on user voice—not assumptions.

| Pitfall | Result | How to Avoid |
|---|---|---|
| Too long phrase as code | Diffuse, hard to compare | Stick to 1–5 words |
| Removing context | Misinterpretation | Keep timestamp/full quote reference |
| Over-proliferation of codes | Fragmentation, clutter | Group early; collapse similar codes |
| Researcher-over-coding | Imposing voice; losing authenticity | Favor their phrasing in initial rounds |
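Several of these pitfalls can be caught with a simple check before a code enters your codebook. The sketch below is one possible way to do that in Python. The `CodedExcerpt` structure, the `check_code` helper, and the source reference format are assumptions made for this example rather than features of any particular tool.

```python
from dataclasses import dataclass

@dataclass
class CodedExcerpt:
    excerpt: str  # full quote, kept so the code never loses its context
    code: str     # the in vivo code taken from the excerpt
    source: str   # transcript ID and timestamp (hypothetical format)

def check_code(item, max_words=5):
    """Return a list of pitfall warnings for one coded excerpt."""
    problems = []
    # An in vivo code should be the participant's wording, not a paraphrase.
    if item.code.lower() not in item.excerpt.lower():
        problems.append("code is not the participant's exact wording")
    # Long phrases are diffuse and hard to compare.
    if not 1 <= len(item.code.split()) <= max_words:
        problems.append(f"code should be 1-{max_words} words")
    # Without a reference back to the transcript, context is lost.
    if not item.source:
        problems.append("missing transcript/timestamp reference")
    return problems

good = CodedExcerpt("It's like walking a tightrope.",
                    "walking a tightrope", "caregiver-03 @ 00:12:41")
bad = CodedExcerpt("I feel like I'm always on call.",
                   "work-life imbalance", "")

print(check_code(good))
print(check_code(bad))
```

The second example fails because "work-life imbalance" is a researcher label rather than the participant's phrasing, and because it has no reference back to the transcript.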

In a healthtech study, multiple caregivers described managing meds:

“It’s like walking a tightrope.”

This phrase wasn’t just poetic. It framed their emotional journey: tension, risk, and fear of making an error. Recognizing it as a core in vivo code shifted product strategy: onboarding changed to include visual safety nets, and messaging shifted toward support and reassurance.

Try this in a spreadsheet or coding tool:

| Excerpt | In Vivo Code | Theme / Notes |
|---|---|---|
| "I never know if the ETA is real." | "ETA is real" | Trust in delivery |
| "I just disappeared." | "I disappeared" | Ignored by customer service |
| "Little oasis in the noise." | "little oasis" | Urban sanctuary |

Start with 5–10 transcripts, tag exact phrasing that sticks out, and let patterns emerge from the ground up.
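If you prefer to build that table in code and open it in a spreadsheet afterwards, the following Python sketch writes the same three rows as CSV using only the standard library. The column names and the `to_csv` helper are illustrative choices, not a required schema.

```python
import csv
import io

# The rows mirror the example table; column names are illustrative.
rows = [
    {"excerpt": "I never know if the ETA is real.",
     "in_vivo_code": "ETA is real", "theme": "Trust in delivery"},
    {"excerpt": "I just disappeared.",
     "in_vivo_code": "I disappeared", "theme": "Ignored by customer service"},
    {"excerpt": "Little oasis in the noise.",
     "in_vivo_code": "little oasis", "theme": "Urban sanctuary"},
]

def to_csv(rows):
    """Serialize coded excerpts to CSV text that a spreadsheet can open."""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer, fieldnames=["excerpt", "in_vivo_code", "theme"]
    )
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()

csv_text = to_csv(rows)
print(csv_text)
```

To save the result to disk, write `csv_text` to a file such as `codes.csv` (a hypothetical filename) and import it into your spreadsheet or coding tool.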

In vivo coding relies heavily on working directly with participant language, making usability and quote handling critical. Tools differ significantly in how easily they support quote-based workflows at scale.

Some platforms offer strong in vivo support but introduce steep learning curves or higher costs as projects grow. Others simplify coding but limit flexibility for advanced qualitative methods. Understanding these trade-offs is essential before committing to a tool.

Comparing NVivo with ATLAS.ti, reviewing NVivo pricing, and scanning broader qualitative analysis software comparisons can help teams choose software that supports in vivo methods without inflating cost or complexity.

In vivo coding is more than a technique; it’s a mindset, a commitment to listening first and labeling second. When you use a tool like UserCall to scale that practice across interviews, you can extract meaningful, human insights without sacrificing nuance.

Remember: The most memorable insights often come from the exact words people use. Let them guide the analysis—and let your coding process stay rooted in the truth of lived experience.

If in vivo coding is part of your qualitative toolkit, pairing it with the right software makes a real difference. Check out our comparison of top thematic analysis coding software to find tools that support flexible, participant-centered coding, or explore Usercall to see how AI-moderated interviews can generate richer raw data to work from.

In vivo coding means using participants' exact words or short phrases as codes during qualitative analysis, with no translation or abstraction. It acts as a linguistic mirror, preserving cultural nuance, emotional weight, and lived experience that researcher-driven labels might dilute. It is rooted in inductive, grounded theory methodology.

Real-world in vivo code examples include 'always on call' from remote workers describing blurred work boundaries, 'little oasis' from urban gardeners expressing sanctuary-seeking behavior, and 'dropping the ball' or 'like a family' from team members revealing emotional frameworks around responsibility and belonging.

Use in vivo coding when conducting open coding in grounded theory studies, working with interviews or focus groups where phrasing matters, preserving cultural specificity or emotional tone in narratives, or capturing quotable phrases that bring analysis to life. It works best in inductive studies where participant language drives meaning.

Avoid in vivo coding when synthesizing across large datasets where too many one-off phrases create fragmentation, when using structured research instruments where topic-focused codes serve better, or when your analysis is ready for theory-building and abstraction using descriptive, value, or axial coding instead.

Start by transcribing faithfully and highlighting emotionally loaded or recurring phrases. Quote phrases directly as in vivo codes, then cluster similar ones together — for example, 'always on call' and 'never logged off' cluster into boundary erosion. Add descriptive or value codes, then abstract clusters into higher-level themes like work–life tension.
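The clustering and abstraction steps can be sketched as two lookup tables: one from in vivo codes to clusters, and one from clusters to themes. This is a minimal Python illustration that assumes you have already decided the groupings by hand. The participant IDs and the `roll_up` helper are hypothetical.

```python
from collections import defaultdict

# Researcher-defined groupings, taken from the example in the text.
code_to_cluster = {
    "always on call": "boundary erosion",
    "never logged off": "boundary erosion",
}
cluster_to_theme = {"boundary erosion": "work-life tension"}

# (participant, in vivo code) pairs; participant IDs are made up.
tagged_segments = [
    ("P1", "always on call"),
    ("P2", "never logged off"),
    ("P4", "always on call"),
]

def roll_up(segments):
    """Group tagged segments by theme, then by cluster."""
    themes = defaultdict(lambda: defaultdict(list))
    for participant, code in segments:
        # Codes without a mapping stay visible instead of being dropped.
        cluster = code_to_cluster.get(code, "unclustered")
        theme = cluster_to_theme.get(cluster, "unassigned")
        themes[theme][cluster].append((participant, code))
    return {theme: dict(clusters) for theme, clusters in themes.items()}

summary = roll_up(tagged_segments)
for theme, clusters in summary.items():
    for cluster, items in clusters.items():
        print(f"{theme} > {cluster}: {len(items)} segments")
```

Keeping the original code and participant attached to every segment means you can always trace a theme back to the exact words that produced it.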

A hybrid coding approach uses in vivo coding in round one to preserve authenticity and catch nuance, descriptive or value codes in round two to group ideas meaningfully, and axial or thematic coding in round three to build connections across the data, balancing participant voice with analytical structure.

AI tools can help with in vivo coding. AI-assisted tools like UserCall let researchers upload voice or text interviews, auto-transcribe and segment by speaker, highlight and tag in vivo codes directly from transcripts, track how often specific phrases appear across participants, and group codes visually to identify emerging clusters without losing the authenticity of participant language.

If you're weighing in vivo coding against other approaches, it helps to see how modern tools handle the process at scale. Check out our roundup of the best AI thematic analysis tools in 2026 to see which ones preserve participant language rather than flattening it. Usercall is built specifically for interview research and keeps you close to the raw data throughout.
